Theoretical basis of AI in urban systems

AI can assist in addressing many issues in urban systems through detailed and extensive sensing of urban environments10. Deep learning11 is a branch of machine learning that utilizes deep neural networks to learn and represent complex patterns in data, which can be employed for tasks such as fine-grained object recognition. Natural language processing12 focuses on how computers understand and process human language, which can be applied to analyze and extract insights from urban-related textual data, such as social media data. Reinforcement learning13 is a learning paradigm that trains intelligent agents to learn optimal action strategies by interacting with the environment, which can be utilized to optimize decision-making in areas such as urban transportation systems and energy management. These theoretical bases enable the use of AI technology to analyze and address problems in urban research, providing deeper insights and better knowledge to support decision-making.

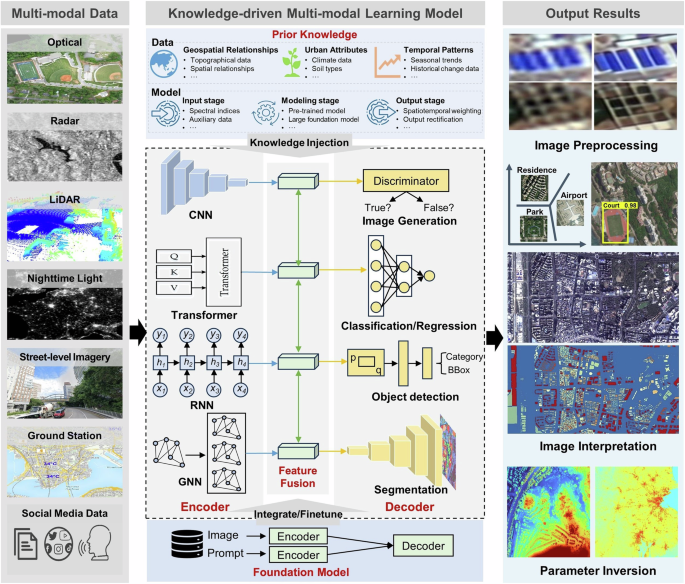

The power of AI in urban research lies in its ability to process multiple types of data, analyze complex patterns, and make informed predictions, which is crucial for understanding complex urban systems. The application of AI methods and techniques usually considers many factors, including research tasks (e.g., image classification and object detection), the modality of data (e.g., optical and radar images), the hardware (e.g., graphics processing unit) and platform (e.g., local, distributed, or cloud computing)14, the selection of models, the construction of networks, and the validation of results. The joint use of multimodal data should be carefully considered in the construction of networks. The criteria for model selection depend on the specific task, the data, and the desired output. The construction of networks is not yet unified or explainable. Therefore, a general framework and guidelines for selecting models, constructing networks, and validating results are needed to fully leverage the potential of AI in urban studies.

Digital image processing

In digital image processing, the convolutional neural network (CNN) is widely used owing to its powerful local feature extraction ability15. For sequential or time-series data, the recurrent neural network (RNN) is popular16. Recently, a newer AI model, the transformer, has achieved great advances in natural language processing and has been successfully transferred to the image processing field. Compared to CNN and RNN, the transformer consists entirely of attention mechanisms and can model long-range dependency between input and output at much lower training cost17. Fueled by the rapid development of hardware, big data, and AI techniques, foundation models based on transformers have been proposed for general purposes and can be readily applied to various downstream tasks18. In the field of remote sensing, foundation models such as Prithvi19 and RemoteCLIP20 have attracted growing attention. These foundation models point to a promising direction for dealing with multi-modal data and general tasks. This direction can be outlined in a general framework with three components: model, input, and output (Fig. 2).
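The attention mechanism on which the transformer is built can be illustrated with a minimal Python sketch of scaled dot-product attention. This is a simplified, single-head version written with the standard library only; the function names and toy inputs are illustrative assumptions and do not correspond to any specific model cited in this section.

```python
import math


def softmax(scores):
    """Convert raw scores into weights that sum to 1 (numerically stable)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """For each query, return a weighted average of the value vectors.

    The weight of each value comes from the dot product between the query
    and the matching key, scaled by sqrt(d_k) and passed through softmax.
    """
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        outputs.append(
            [
                sum(w * v[j] for w, v in zip(weights, values))
                for j in range(len(values[0]))
            ]
        )
    return outputs


# Toy example: two identical keys receive equal weights, so the output
# is the plain average of the two value vectors.
example = scaled_dot_product_attention(
    queries=[[1.0, 0.0]],
    keys=[[1.0, 0.0], [1.0, 0.0]],
    values=[[0.0, 2.0], [4.0, 6.0]],
)
```

Because every query is compared with every key, each output position can draw on any input position, which is how attention captures long-range dependencies.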

Model

AI models often operate as a “black box”21, neglecting the underlying physical mechanisms. To address this issue, we propose a general AI framework, which consists of three parts: the encoder for feature extraction; the feature fusion module for the fusion of diverse features; and the decoder for the reconstruction of output features. The key innovation lies in the utilization of prior knowledge and the integration of major cutting-edge AI models, such as CNN, transformer, RNN, graph neural network (GNN), and generative adversarial network (GAN). Different AI models can serve as encoders or decoders based on their strengths in feature representation. Prior knowledge can be integrated at different stages. At the input stage, it can enrich and integrate prominent features, such as remotely sensed spectral indices, while reducing redundancy. During modeling, prior knowledge can be accounted for in network weights through model pre-training or fine-tuning. At the output stage, it can guide the learning process and provide more reliable outputs, e.g., by the addition of spatial-temporal weighted terms. This knowledge-driven approach enhances model interpretability and generalization and compensates for limited training data.
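The data flow through the three parts of the framework (encoder, feature fusion, decoder) can be sketched structurally in Python. This is only a schematic under stated assumptions: the encoders, the concatenation-based fusion, and the decoder below are hypothetical placeholders standing in for trained networks such as CNNs or transformers, and none of the names come from the framework itself.

```python
from typing import Callable, Dict, List, Sequence

Vector = List[float]


def run_framework(
    inputs: Dict[str, Sequence[float]],
    encoders: Dict[str, Callable[[Sequence[float]], Vector]],
    fuse: Callable[[List[Vector]], Vector],
    decode: Callable[[Vector], float],
) -> float:
    """Pass each modality through its encoder, fuse the features, decode."""
    # Encoder stage: one encoder per input modality (fixed order for clarity).
    features = [encoders[name](data) for name, data in sorted(inputs.items())]
    # Fusion stage: combine the per-modality features into one representation.
    fused = fuse(features)
    # Decoder stage: map the fused representation to the output.
    return decode(fused)


# Hypothetical placeholders; real systems would use trained networks here.
def mean_encoder(data: Sequence[float]) -> Vector:
    return [sum(data) / len(data)]


def range_encoder(data: Sequence[float]) -> Vector:
    return [max(data) - min(data)]


def concat_fusion(features: List[Vector]) -> Vector:
    return [value for feature in features for value in feature]


def sum_decoder(fused: Vector) -> float:
    return sum(fused)


result = run_framework(
    inputs={"optical": [0.2, 0.4, 0.6], "sar": [1.0, 3.0]},
    encoders={"optical": mean_encoder, "sar": range_encoder},
    fuse=concat_fusion,
    decode=sum_decoder,
)
```

Keeping the three stages as interchangeable callables mirrors the idea that different AI models can serve as encoders or decoders depending on their strengths.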

Input data

Earth observation (EO) data are characterized by diverse spectral, spatial, and temporal resolutions and broad spatial coverage, enabling long-term urban monitoring. Within the AI framework, prior knowledge complements raw data, especially when the available input data are limited. The type of prior knowledge to be incorporated depends mainly on research objectives, geospatial relationships, urban attributes, and temporal patterns (see Fig. 2).
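As one concrete example of prior knowledge complementing raw input data, the sketch below computes a spectral index and appends it as an extra input feature. The normalized difference vegetation index (NDVI) is chosen here purely for illustration and is not named in the text above; the function names and reflectance values are likewise assumptions.

```python
def ndvi(red, nir, eps=1e-9):
    """NDVI = (NIR - Red) / (NIR + Red); eps guards against division by zero."""
    return (nir - red) / (nir + red + eps)


def add_index_channel(pixels):
    """Append NDVI to each (red, nir) reflectance pair as a third feature."""
    return [(red, nir, ndvi(red, nir)) for red, nir in pixels]


# Hypothetical reflectance values: a vegetated pixel and a built-up pixel.
pixels = [(0.1, 0.5), (0.3, 0.3)]
enriched = add_index_channel(pixels)
```

Vegetated surfaces reflect strongly in the near-infrared and weakly in the red band, so their NDVI is high, whereas surfaces with similar reflectance in both bands yield values near zero.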

Output data

The output is application-specific, ranging from image pre-processing and interpretation to parameter estimation. Utilizing an appropriate model informed by practical urban knowledge yields more accurate and comprehensive insights, contributing to more effective urban sensing and imaging.

Urban mapping

AI can handle different types of data, including text, audio, images, and video, and can integrate them to produce more accurate results than traditional methods. It enhances data interpretation capabilities and supports informed decision-making in various fields. By processing and analyzing these varied data, AI has revolutionized the field of urban mapping. In this section, we discuss three applications of AI in urban mapping: land use and land cover (LULC) mapping, building detection, and road extraction.

LULC mapping has long been a hot topic and is evolving with deep learning22. The exceptional performance of deep learning in LULC mapping is due to several factors. First, deep learning eliminates the need for manual feature engineering because the models can learn directly from data. Second, deep learning makes it easier to incorporate heterogeneous multi-modal data into the mapping process. Third, deep learning can generate diverse output types, such as point-level categories, segmented objects, and bounding boxes23. Nevertheless, deep learning is data-driven and relies heavily on labeled data. In addition, although diverse LULC products have been developed for local or global regions, considerable uncertainties and inconsistencies exist among them. Urban green spaces (UGS), as a special type of land cover, play an important role in understanding urban ecosystems, climate, environment, public health concerns, and the SDGs at various spatial scales. Mapping of UGS with remote sensing is challenging due to the existence of mixed pixels and the cost and time of collecting quality training data. CNN and other deep learning methods have been employed for UGS mapping and have been found effective24.

Building detection is one of the most profoundly advanced areas of EO-based deep learning. Historically, building feature-based methods have been developed to advance automated building detection25, but they rely on domain-specific knowledge to manually design the building-related features to be detected and mapped. Deep learning models, trained using existing open-source databases obtained through citizen science, have become mainstream for building detection26. For instance, Microsoft has released a global building footprints dataset generated by deep learning networks, which was almost impossible to achieve in the past, yet the completeness of this dataset still needs attention.

Similarly, AI has made the automatic extraction of roads possible. For example, foundation models have been utilized to extract road networks by employing autoencoders and contrastive learning for self-supervised training on large-scale unlabeled remote sensing images27. Parameter-efficient fine-tuning methods were used to adapt these general foundation models to road extraction tasks. Because self-supervised training learns the distribution of vast amounts of data, the model’s feature representation capabilities are significantly enhanced, thereby improving the performance of road extraction. Cross-modal learning has also been applied to road extraction tasks28. For instance, GPS data are used to address, to some extent, the issue of insufficient road labels. AI methods are still constrained in road detection and mapping in several aspects. First, there is a lack of an accurate and diverse training dataset for global-scale road mapping29. Second, the generalization ability of AI models remains limited for global applications. Third, the lack of inductive reasoning ability in AI models leads to disconnected roads, which may lead to inaccurate conclusions in road network-based urban studies. AI methods focus mainly on recognizing individual pixels as roads, rather than inferring road connectivity according to the cognitive process applied by human beings30.
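The connectivity problem described above can be made concrete with a small Python sketch that counts connected components in a binary road mask. It is a generic breadth-first search over a toy grid, not a method taken from the cited studies; the 4-neighbor connectivity rule, the function name, and the example masks are assumptions for illustration.

```python
from collections import deque


def count_road_components(mask):
    """Count groups of road pixels (value 1) joined by 4-neighbor adjacency."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    components = 0
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] != 1 or seen[r][c]:
                continue
            # A new, unvisited road pixel starts a new component.
            components += 1
            seen[r][c] = True
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (
                        0 <= ny < rows
                        and 0 <= nx < cols
                        and mask[ny][nx] == 1
                        and not seen[ny][nx]
                    ):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
    return components


# Hypothetical pixel-wise prediction with a single missed pixel in the road.
broken_road = [
    [0, 0, 0, 0, 0],
    [1, 1, 0, 1, 1],
    [0, 0, 0, 0, 0],
]
# The same road with the gap filled.
intact_road = [
    [0, 0, 0, 0, 0],
    [1, 1, 1, 1, 1],
    [0, 0, 0, 0, 0],
]
broken_count = count_road_components(broken_road)  # 2 components
intact_count = count_road_components(intact_road)  # 1 component
```

A single misclassified pixel changes only one label in the mask, yet it splits the network in two, which is why pixel-level accuracy alone can hide errors that matter for road network-based analysis.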

Urban observing and sensing

Following the discussion of the three most widespread applications in urban mapping, where optical remote sensing methods are primarily utilized, this section focuses on urban observation and sensing with other sensing systems and platforms, such as LiDAR, Synthetic Aperture Radar (SAR), and street-level imagery, as well as people as virtual sensors.

LiDAR technology offers exceptional 3D data acquisition capabilities for urban landscapes, structures, and infrastructure, as well as for monitoring changes over time. Small-footprint airborne LiDAR delivers high-resolution topographic data, excelling at generating detailed 3D urban environment models31. Integrating AI with LiDAR data processing enables sophisticated classification and analysis of urban features. For instance, CNN-based AI models have improved the interpretation and merging of information from diverse sensor modalities, thereby enhancing point semantic labeling and classification accuracy32. Nonetheless, fusing LiDAR with other sensors to improve information retrieval poses significant challenges for urban applications because of occlusion arising from the LiDAR viewing geometry. To address these challenges, cross-modal learning strategies leverage LiDAR data combined with visual and thermal imagery to compensate for areas where LiDAR data are incomplete or obstructed, thereby enriching the dataset33. In addition, self-supervised learning models have been utilized to autonomously predict missing or noisy data sections based on patterns identified in complete and clean sections34. This approach enhances data quality and facilitates learning from the intrinsic structure of LiDAR data without relying on manually labeled examples, which is particularly beneficial for managing large datasets and standardizing data quality across different systems.

SAR, featuring all-weather capability, rapid revisit, and multi-angle observations, is an important EO technology. The increasing accessibility of SAR data has significantly enabled the application of AI for urban sensing and mapping35. Additionally, interferometric SAR (InSAR) techniques are used to process and analyze multitemporal SAR data, enabling accurate measurements of urban surface and infrastructure deformation. Compared to optical images, SAR images exhibit distinct characteristics, including speckle noise, multipath scattering, and geometrical distortions, which negatively impact their interpretation. These issues also pose challenges for AI-based analysis of SAR images in conjunction with optical ones.

The potential of street-level imagery has been advanced with AI for data mining and knowledge discovery10. For example, it is possible to evaluate the conditions of urban infrastructure through semantic segmentation methodologies36. Deeper insights, including safety, architectural age and style, and the urban socio-economic environment are also available through AI37. Despite these advancements, challenges remain, such as in the integration of street-level with satellite/airborne data. Satellite/airborne sensing provides a large-scale perspective but is limited to top-down or oblique observations, while street-level imagery offers a ground-based observation from a human’s perspective.

The aforementioned EO technologies have traditionally been applied to study static objects such as LULC. Recently, massive geo-tagged data on dynamic objects (e.g., human behaviors) have been generated by physical and people sensors (people as virtual sensors). These data, such as GPS trajectories, surveillance data, urban environment data (e.g., temperature and air quality data), and human-generated data, are mostly associated with geo-locations, capturing urban dynamics (e.g., human movements, urban events and processes) from different angles. They provide multi-dimensional EO data in a granular manner, which has greatly catalyzed the application of AI techniques to urban sensing38. For instance, AI has been widely applied to GPS trajectories and urban environment data, which has significantly improved human movement prediction39 and urban environment change forecasting40. Nevertheless, challenges remain, such as fusing the geo-tagged data with the EO data for effective AI modeling, owing to differences in their spatiotemporal scales and measurement quality.

Human-generated data can be categorized into passive sensing (e.g., data generated from social media, location-based services, and mobile devices) and active sensing (e.g., Public Participation Geographic Information Systems (PPGIS), Volunteered Geographic Information Systems (VGIS), and surveys). These data sources offer diverse insights into human activities and behaviors, as well as other human factors related to social sustainability, such as environmental experiences, perceptions, and needs. Social media data, for instance, can be used to analyze social phenomena such as segregation, while PPGIS data can help identify community needs and preferences regarding urban planning.